
The LQS vs. AACR Amazon listing gap is where most audits fail, and where your margin quietly disappears. For $3M+ brands with complex catalogs and variant-heavy assortments, “optimized” is not a diagnosis. It’s a placeholder.

Amazon evaluates every listing twice — once by the shopper, once by the machine.

Most audits only check one. This guide breaks down LQS and AACR: what each measures, where they diverge, and how to sequence fixes that actually move the needle.

If your catalog has plateaued, the fix likely isn’t more content. It’s the right diagnosis. Book Your ROI Forecast

Why Your Listing Needs Two Scores to Win

A single listing score is lazy thinking — and it’s why brands with “optimized” listings still plateau.

Amazon does not judge your product page as one unified object. Shoppers judge whether the offer feels credible, clear, and worth the money. Amazon’s systems judge whether the listing is structured well enough to interpret, classify, and surface across search, recommendations, and AI-assisted shopping flows.

Most listing audits only check one of those two things. That is the gap this guide fills. If you need to improve your Amazon catalog across both layers, you need a diagnostic that separates human friction from machine friction, not a checklist that treats them as the same problem.

That split explains a problem sellers misdiagnose every day. The listing looks polished, yet traffic stalls. Or it ranks, gets clicks, and still fails to convert because the page does not build trust fast enough. One score hides that distinction. Two scores expose it.

LQS and AACR solve different problems. LQS tells you whether a human is likely to understand and believe the listing. AACR tells you whether Amazon’s machine layer can read the page cleanly enough to support discovery and add-to-cart behavior. Treating those as the same job is how brands waste months rewriting bullets while the underlying issue sits in entity clarity, media quality, or missing context.

The old playbook rewarded surface optimization. More keywords. More bullets. More images. That approach breaks once listings are evaluated by both people and systems with different standards. Even your visual stack now carries more weight because it shapes shopper confidence and machine interpretation at the same time. That means creative decisions inside your listing are also infrastructure decisions.

A listing that persuades humans but confuses Amazon is fragile. A listing that satisfies Amazon but fails to persuade humans is dead weight.

This is the logic behind Adverio’s dual-scoring model. One score for human perception. One score for machine readiness. If you want profit, stop asking whether your listing is “optimized” and start asking which audience it is failing.

LQS: The Human Perception Score — What It Measures

LQS measures how convincing your listing feels to a human shopper. It runs on a 1–10 scale, with 7+ considered the threshold for a listing in decent shape. The score is built from four weighted areas: copy (26 points), media (23 points), reviews (12 points), and offer (4.75 points).
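To make the weighting concrete, here is a minimal Python sketch. It takes the four point weights above at face value and assumes, hypothetically, that each component is scored as the share of its maximum points a listing earns and that the total is mapped linearly onto the 1–10 scale. That mapping, and the names `estimate_lqs` and `weakest_component`, are illustrative assumptions; the published formula may compute the score differently.

```python
# Hypothetical sketch. The component weights are the ones quoted above
# (copy 26, media 23, reviews 12, offer 4.75 points). The linear mapping
# onto a 1-10 scale is an ASSUMPTION, not the published LQS formula.
MAX_POINTS = {"copy": 26.0, "media": 23.0, "reviews": 12.0, "offer": 4.75}


def _clamp(x: float) -> float:
    """Keep a component fraction inside [0, 1]."""
    return min(max(x, 0.0), 1.0)


def estimate_lqs(fractions: dict[str, float]) -> float:
    """Estimate LQS from the share of each component's points a listing earns.

    `fractions` maps component name -> fraction in [0, 1];
    missing components count as 0.
    """
    total_max = sum(MAX_POINTS.values())  # 65.75 points in total
    earned = sum(_clamp(fractions.get(name, 0.0)) * pts
                 for name, pts in MAX_POINTS.items())
    # Assumed mapping: 0 points -> 1.0, all points -> 10.0
    return round(1.0 + 9.0 * earned / total_max, 2)


def weakest_component(fractions: dict[str, float]) -> str:
    """Component with the most points left on the table."""
    return max(MAX_POINTS,
               key=lambda name: MAX_POINTS[name] * (1.0 - _clamp(fractions.get(name, 0.0))))


listing = {"copy": 0.9, "media": 0.5, "reviews": 0.8, "offer": 1.0}
print(estimate_lqs(listing))       # 7.74
print(weakest_component(listing))  # media
```

`weakest_component` ranks components by points left unearned, which is one simple way to decide which component to fix first: here the copy is strong, but the media gap costs the most.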

The formula, component breakdown, and how to improve each area are covered in full in two existing posts; read those before using the diagnostic sequence below.

The short version: if LQS is weak, the page is failing the human shopper. Better images won’t rescue a trust problem. Better bullets won’t fix a media gap. Fix the right component first.

What this guide adds is the next diagnostic step — what to do when LQS is strong and performance is still flat. That is the AACR problem.

This is the layer most listing audits miss entirely, and the most common reason a polished listing still stalls. For the full strategic context on how Rufus and COSMO evaluate listings, see Adverio’s COSMO and Rufus strategy guide.

Understanding AACR: The Machine Readiness Score

AACR, or Agent Add-to-Cart Readiness, scores how well Amazon’s machine layer can interpret, classify, and act on your listing. LQS judges the human reaction. AACR judges whether the system can confidently use your page to match intent and support add-to-cart behavior.

That distinction matters because a listing can look polished to a shopper and still fail structurally inside Amazon’s AI stack. Adverio uses AACR to expose that hidden layer. If LQS explains why people hesitate, AACR explains why the algorithm never gave you a real shot in the first place.

What AACR measures that LQS does not

AACR focuses on machine usability.

It examines whether the listing gives Amazon enough structured meaning to understand the product, connect it to nuanced queries, and keep it eligible across category rules and content constraints. That includes three areas:

- Rufus query coverage: Can the listing respond to conversational shopping intent with clear, machine-readable meaning?
- Cosmo entity completeness: Does the page include the attributes, relationships, and category signals Amazon expects for this product type?
- Compliance readiness: Is the content clean enough to avoid suppression risk, misclassification, or reduced distribution?

If you’re working on Amazon SEO strategies, stop treating keyword presence as the goal. Machines do not reward word stuffing. They reward usable meaning.

How Rufus and Cosmo read your listing

Rufus is intent-facing. Cosmo is structure-facing.

Rufus looks for whether your listing can answer the kind of messy, natural-language questions shoppers now ask. Cosmo looks for whether the product data is complete, logically organized, and semantically consistent. That is why a simple, precise sentence often beats a bloated one packed with repeated keywords.

Human persuasion and machine interpretability are related, but they are not the same diagnostic job. A listing can read clearly to a shopper and still be misread by the algorithm. That’s the gap most audits don’t catch.

What a strong AACR looks like in practice

A high-AACR listing removes ambiguity.

The product type is obvious. Key attributes are explicit. Variant relationships make sense. Use cases are stated in plain language. Category compliance is clean. The content gives Amazon fewer reasons to guess, and guessing is what kills distribution.

Strong AACR does not make the page feel robotic. It makes the page legible to the systems that decide when, where, and for which query your product appears.
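The sketch below illustrates the "fewer reasons to guess" idea as a simple completeness and variant check in Python. The required-attribute list for a weighted blanket, the variation axes, and the `Listing`, `completeness`, and `variant_gaps` names are all hypothetical; this is not Amazon's catalog schema and not how AACR is actually scored.

```python
# Hypothetical sketch of an ambiguity check in the spirit of AACR.
# REQUIRED_ATTRIBUTES and the variation axes are illustrative assumptions,
# not Amazon's catalog schema or the AACR scoring method.
from dataclasses import dataclass, field

REQUIRED_ATTRIBUTES = {
    "weighted_blanket": ["material", "weight", "size", "use_case"],
}


@dataclass
class Listing:
    product_type: str
    attributes: dict[str, str] = field(default_factory=dict)
    variants: list[dict[str, str]] = field(default_factory=list)  # child variants


def completeness(listing: Listing) -> float:
    """Share of required attributes that are present and non-blank."""
    required = REQUIRED_ATTRIBUTES.get(listing.product_type, [])
    if not required:
        return 0.0  # unrecognized product type: the system has to guess
    present = sum(1 for attr in required
                  if listing.attributes.get(attr, "").strip())
    return present / len(required)


def variant_gaps(listing: Listing, axes: tuple[str, ...] = ("weight", "size")) -> list[int]:
    """Indices of child variants missing a value on any variation axis."""
    return [i for i, child in enumerate(listing.variants)
            if any(not child.get(axis, "").strip() for axis in axes)]


blanket = Listing(
    product_type="weighted_blanket",
    attributes={"material": "cotton", "weight": "15 lb", "size": ""},
    variants=[{"weight": "15 lb", "size": "60 x 80 in"}, {"weight": "20 lb"}],
)
print(completeness(blanket))  # 0.5 (size is blank, use_case is missing)
print(variant_gaps(blanket))  # [1]
```

A blank value counts the same as a missing one here, on the reasoning that an empty field gives the system nothing to interpret either.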

LQS vs AACR: Where They Overlap and Diverge

Sellers who force one score to do two jobs keep misreading their listing.

LQS and AACR overlap because both affect sales. They diverge because they judge different audiences. One asks whether a shopper trusts and understands the page. The other asks whether Amazon’s systems can classify, match, and distribute it correctly. If you want to uncover Amazon ranking leaks, treat those as separate diagnostic questions.

Uxia’s guide on synthetic users captures the same core problem. Different evaluators expose different defects. A page can persuade a person and still confuse a machine. It can also be machine-legible and still fail to convert.

Here is the practical split for LQS vs AACR Amazon listing analysis:

| Listing Dimension | Measured by LQS (Human Perception) | Measured by AACR (Machine Readiness) |
|---|---|---|
| Title quality | Yes. Clarity, appeal, readability | Yes. Semantic clarity, usable structure |
| Image stack | Yes. Trust, context, persuasion | Limited. Only where assets reinforce listing completeness and consistency |
| Review volume | Yes. Social proof and trust | Indirectly. Review patterns can shape confidence signals around the offer |
| Keyword presence | Yes. Relevance and readability | Yes. Semantic fit and query coverage |
| Semantic entity coverage | No | Yes |
| Conversational query coverage | No | Yes |
| Price-to-value signal | Yes | Yes, where offer structure and reliability support add-to-cart confidence |

The overlap matters, but the scoring logic is different.

Clear titles help both. So does consistent benefit language. So does a coherent offer. But once you move past those shared inputs, the gap gets obvious fast. LQS punishes confusion, weak proof, and poor visual selling. AACR punishes ambiguity, missing attributes, and shallow semantic coverage.

That distinction is the whole point of Adverio’s dual-scoring model. Standard listing reviews blur human persuasion and machine readability into one vague grade. That hides the underlying failure mode. Two scores force a cleaner diagnosis, and cleaner diagnosis is what leads to profit.

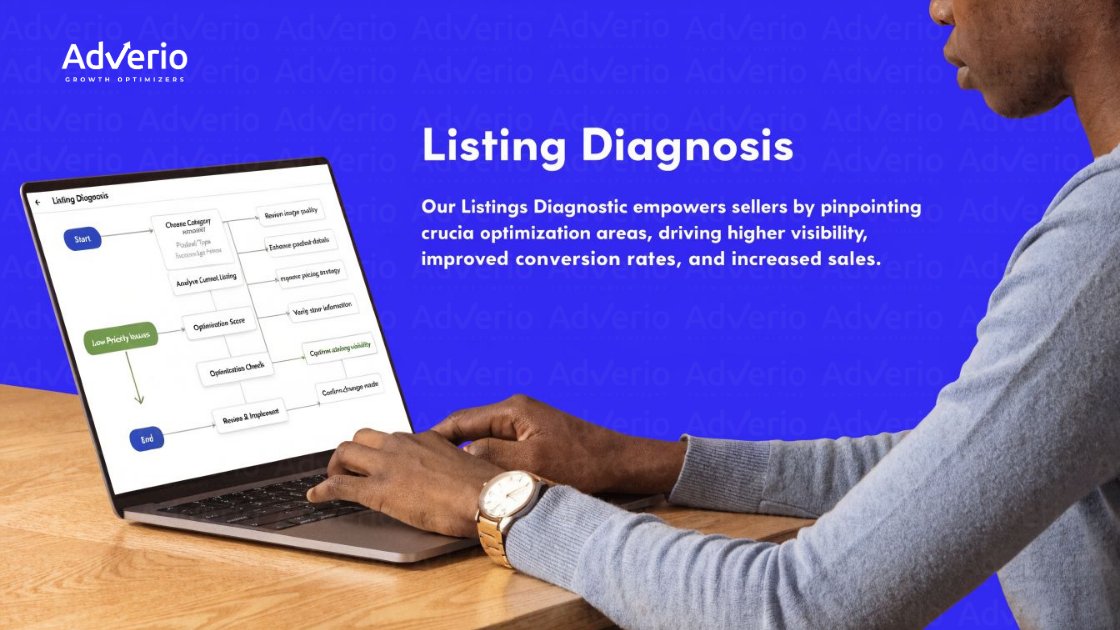

Using the Dual Scores for a Listing Diagnosis

Running a proper LQS vs AACR Amazon listing diagnostic takes the guesswork out of where to fix first.

Stop treating listing diagnosis like a generic optimization checklist. A weak Amazon listing usually fails in one of two places: the human layer or the machine layer. If you do not separate them, you waste time fixing copy on a discoverability problem or patching attributes on a persuasion problem.

Start with the score gap.

If LQS sits below 6.5, fix the shopper experience first. Clean up the title, tighten the image stack, remove trust friction, and make the offer easier to understand. If LQS is strong and performance still stalls, shift your attention to AACR. That is where hidden structural failures show up, especially in catalogs with messy variants, incomplete attributes, or weak query coverage.

Large catalogs make this harder, not easier. Parent-child issues, inconsistent attribute logic, and sloppy offer structure can drag down performance even when the page looks polished to a shopper. That blind spot is exactly why one score is not enough.

For brands managing 250+ SKUs, an Amazon account management approach that ties catalog governance to listing performance is often the actual unlock.

Use this order:

- Check LQS first: A weak LQS points to visible conversion friction. Fix what shoppers see and feel.
- Check AACR second: If the listing looks persuasive but still underdelivers, inspect semantic coverage, attribute completeness, and machine readability.
- Review offer structure: Prioritize this step for apparel, bedding, automotive, supplements, and any catalog with heavy variant complexity.
- Match the problem to the fix: High LQS with low AACR means the machine does not interpret the offer cleanly. Low LQS with high AACR means shoppers do not trust or want the page enough to buy.
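The diagnostic order can be sketched as a small triage function. The 6.5 LQS cutoff and the variant-heavy categories come from the text above; the AACR cutoff and the assumption that AACR sits on a comparable 0-10 scale are placeholders for illustration, and `triage` is a hypothetical name.

```python
# Hypothetical triage sketch of the diagnostic order.
# LQS_FIX_FIRST (6.5) and the variant-heavy categories come from the text;
# AACR_THRESHOLD and a 0-10 AACR scale are assumptions for illustration.
LQS_FIX_FIRST = 6.5
AACR_THRESHOLD = 6.5  # assumed cutoff, not a published value
VARIANT_HEAVY = {"apparel", "bedding", "automotive", "supplements"}


def triage(lqs: float, aacr: float, category: str, many_variants: bool = False) -> list[str]:
    """Return the layers to work on, in the order the sequence suggests."""
    steps: list[str] = []
    # 1. Weak LQS: visible conversion friction comes first.
    if lqs < LQS_FIX_FIRST:
        steps.append("human: title, images, trust friction, offer clarity")
    # 2. Weak AACR: semantic coverage, attributes, machine readability.
    if aacr < AACR_THRESHOLD:
        steps.append("machine: semantic coverage, attribute completeness, readability")
    # 3. Offer structure gets priority in variant-heavy catalogs.
    if many_variants or category.lower() in VARIANT_HEAVY:
        steps.append("offer structure: parent-child and variant logic")
    return steps


print(triage(8.2, 4.0, "Bedding"))  # machine layer, then offer structure
print(triage(5.1, 7.9, "kitchen"))  # human layer only
```

An empty result means neither score flags a bottleneck under these assumed cutoffs, which is a cue to look beyond the listing itself rather than to rerun the same checklist.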

Teams that want to uncover Amazon ranking leaks should use this sequence every time. It cuts through vanity audits and forces a real diagnosis. For brands with heavy catalogs, pairing this with a structured Amazon catalog management review is how you stop guessing and start fixing the layer that is blocking profit.

A Worked Example of the Dual Scoring Diagnostic

Take a hypothetical premium weighted blanket listing.

Listing A with strong LQS and weak AACR

This listing has polished images, strong bullets, solid reviews, and clear consumer-facing copy. The page feels premium. Shoppers who land convert reasonably well.

But the listing underperforms because the semantic layer is thin. Variant attributes are messy. Material, weight, use-case, and size relationships aren’t expressed with enough structure. Rufus-style conversational coverage is weak. The machine understands less than the shopper does.

That listing can look healthy in a traditional audit and still miss add-to-cart opportunities.

Listing B with weaker LQS and stronger AACR

This listing is structurally cleaner. Attributes are complete. Variant logic is tighter. The machine can interpret the offer well.

But the page is ugly. Images are generic. Bullets don’t resolve objections. The shopper doesn’t feel enough confidence to buy.

This listing gets found more cleanly than Listing A, but it fails later in the funnel.

What the diagnosis tells you

The wrong operator response is to give both listings the same optimization checklist.

The right response is targeted:

- Listing A needs machine-readiness fixes around semantic coverage, query resolution, and variant logic.
- Listing B needs human-facing fixes around trust, differentiation, and conversion persuasion.

A single score hides the reason a listing fails. Two scores expose it.

How Adverio Uses Dual Scoring to Drive Growth

Adverio runs LQS and AACR together in every listing audit, then ranks fixes by revenue impact. No guessing. No “optimize everything” checklists.

If AACR is the constraint, the work goes into semantic structure, attribute clarity, and variant logic. If LQS is the constraint, the focus is images, objection handling, and trust.

That sequencing matters because brands with 250+ SKUs cannot afford to spend budget on the wrong layer. Adverio’s Amazon listing optimization system is built around exactly this diagnostic.

It separates machine friction from human friction and targets the actual bottleneck.

That is how listing work stops being overhead and starts becoming a margin driver. Ready to find out which layer is costing you? Book Your ROI Forecast

Frequently Asked Questions

Can I calculate LQS myself?

Yes, at least directionally. LQS has a defined structure and formula, so experienced operators can estimate where a listing is weak.

The challenge isn’t getting a number. It’s interpreting what low component scores mean for conversion behavior.

Is AACR an official Amazon metric?

No. AACR is a performance framework used to evaluate machine-readiness and add-to-cart probability through the algorithmic layer.

It’s useful because standard Amazon metrics don’t explain why a listing can look healthy and still stall.

Which matters more, LQS or AACR?

Neither wins on its own. LQS matters when the page isn’t convincing. AACR matters when the listing isn’t machine-ready.

If one is broken, the other can’t carry the whole system.

How do these scores relate to ACoS and TACoS?

They are upstream diagnostic signals.

Better listing quality and stronger machine readiness support more efficient traffic and stronger conversion, which improves how ad spend performs over time.
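The link to ad efficiency is plain arithmetic. Ad spend is clicks × CPC and ad-attributed sales are clicks × conversion rate × average order value, so ACoS reduces to CPC / (CVR × AOV): at the same CPC and order value, a higher conversion rate lowers ACoS. TACoS divides the same spend by total sales, so organic sales gains pull it down as well. The Python sketch below uses hypothetical numbers only.

```python
# Standard definitions: ACoS = ad spend / ad-attributed sales,
# TACoS = ad spend / total sales (ad-attributed + organic).
# All numbers below are hypothetical.


def acos(cpc: float, cvr: float, aov: float) -> float:
    """ACoS from unit economics: (clicks * CPC) / (clicks * CVR * AOV)."""
    return cpc / (cvr * aov)


def tacos(ad_spend: float, total_sales: float) -> float:
    """Ad spend as a share of all sales, organic included."""
    return ad_spend / total_sales


# Same $1.20 CPC and $40 average order value; only conversion rate changes.
before = acos(cpc=1.20, cvr=0.10, aov=40.0)  # about 0.30, i.e. 30% ACoS
after = acos(cpc=1.20, cvr=0.12, aov=40.0)   # about 0.25, i.e. 25% ACoS
print(f"ACoS {before:.0%} -> {after:.0%}")   # ACoS 30% -> 25%

# Same $3,000 spend while total sales grow from $20,000 to $24,000.
print(f"TACoS {tacos(3000.0, 20000.0):.1%} -> {tacos(3000.0, 24000.0):.1%}")  # TACoS 15.0% -> 12.5%
```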

What kind of brands need dual scoring most?

Brands with complex catalogs, variant-heavy assortments, and plateaued growth need it most.

The more SKU complexity you manage, the more likely it is that a standard content audit misses the underlying leak.


References

- Jungle Scout on Listing Quality Score and Listing Optimization Score
- Adverio on listing quality blind spots and offer quality weighting
- Chris Turton on why Amazon listings aren’t ranking and the LQS to AACR gap

Your listing isn’t underperforming because Amazon is mysterious. It’s underperforming because you’re probably measuring the wrong layer. Adverio helps brands diagnose whether the problem is human conversion friction, machine-readiness failure, or both, then turn that diagnosis into a profit plan. If your catalog has plateaued, Book Your ROI Forecast